Generalization, not storage

Episodes are consolidated into generalized concepts with confidence, conditions, and exceptions. Not another vector store.

A memory layer that consolidates raw experiences into generalized knowledge. Episodes in, concepts out — like how your brain works overnight.

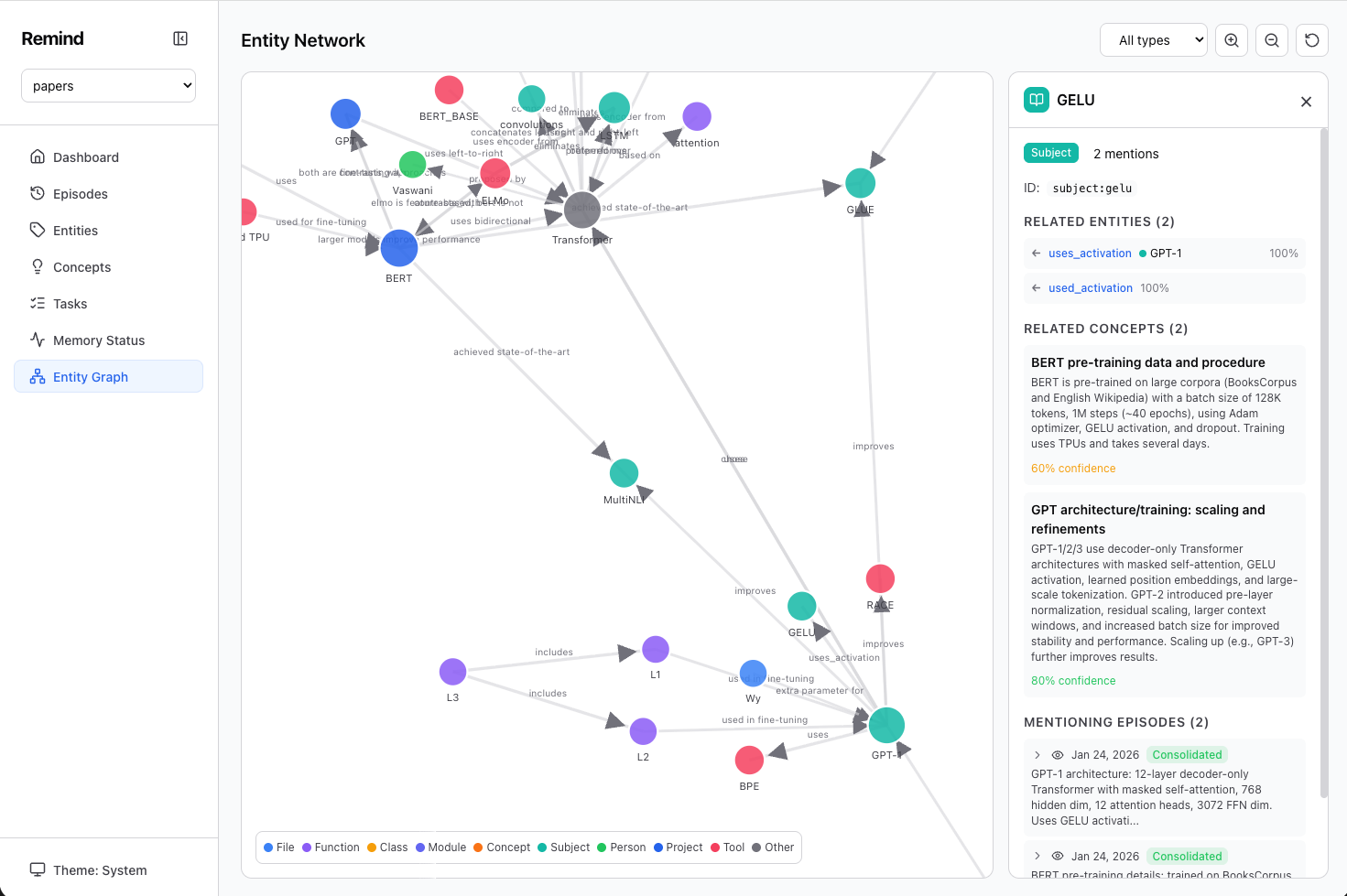

Entity graph built from ingesting research papers — concepts, tools, and their relationships, all extracted automatically.

The same consolidation loop your brain runs during sleep, applied to AI memory.

Your agent stores raw experiences as episodes. Fast — no LLM calls.

remind remember "User prefers Rust for systems work"

remind remember "Chose PostgreSQL over MySQL for the user store" -t decision

Consolidation is Remind's "sleep" process. The LLM reviews episodes, finds patterns, extracts entities, and creates generalized concepts with relations between them.
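To make "episodes in, concepts out" concrete, here is a minimal sketch of what the two kinds of record might look like. The class names, field names, and the confidence value are illustrative assumptions, not Remind's actual schema. The concept is written by hand where the real tool would have an LLM produce it.

```python
from dataclasses import dataclass, field


@dataclass
class Episode:
    """A raw experience, stored as-is (hypothetical shape)."""
    text: str
    # Assumed to correspond loosely to the value passed with -t, e.g. "decision".
    kind: str = "observation"


@dataclass
class Concept:
    """A generalized statement distilled from episodes (hypothetical shape)."""
    summary: str
    confidence: float                                         # 0.0 to 1.0
    conditions: list[str] = field(default_factory=list)       # when it applies
    exceptions: list[str] = field(default_factory=list)       # known counterexamples
    source_episodes: list[int] = field(default_factory=list)  # indices into the log


episodes = [
    Episode("User prefers Rust for systems work"),
    Episode("Chose PostgreSQL over MySQL for the user store", kind="decision"),
]

# Hand-written stand-in for what a consolidation pass might emit.
concept = Concept(
    summary="User gravitates toward statically typed, performance-oriented languages",
    confidence=0.7,  # illustrative value only
    conditions=["systems work"],
    source_episodes=[0],
)
```

Keeping `source_episodes` on the concept is one way to let a generalization be traced back to the experiences that support it.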

remind consolidate

Retrieval uses spreading activation, not just keyword matching. Queries activate matching concepts, which in turn activate related concepts through the graph.

remind recall "What tech stack decisions have we made?"

Returns generalized concepts like "User gravitates toward statically typed, performance-oriented languages", not a list of raw transcripts.
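The idea behind spreading activation can be sketched in a few lines. This is a generic textbook-style version, not Remind's implementation: the function name, the `decay`, `threshold`, and `max_hops` parameters, and the toy graph are all assumptions made for illustration.

```python
from collections import defaultdict


def spread_activation(graph, seeds, decay=0.5, threshold=0.1, max_hops=3):
    """Rank nodes by activation received from the seed nodes.

    graph: dict mapping node -> list of (neighbor, edge_weight)
    seeds: dict mapping node -> initial activation (the direct query matches)
    """
    activation = dict(seeds)
    frontier = dict(seeds)
    for _ in range(max_hops):
        next_frontier = defaultdict(float)
        for node, energy in frontier.items():
            for neighbor, weight in graph.get(node, []):
                passed = energy * weight * decay  # energy fades with each hop
                if passed >= threshold:           # drop negligible signals
                    next_frontier[neighbor] += passed
        if not next_frontier:
            break
        for node, energy in next_frontier.items():
            activation[node] = activation.get(node, 0.0) + energy
        frontier = next_frontier
    return sorted(activation.items(), key=lambda item: item[1], reverse=True)


# Toy concept graph (hypothetical): the query matches one concept directly.
graph = {
    "tech stack decisions": [("PostgreSQL", 0.9), ("Rust", 0.8)],
    "Rust": [("statically typed languages", 0.9)],
    "PostgreSQL": [("user store", 0.7)],
}
ranked = spread_activation(graph, {"tech stack decisions": 1.0})
for name, score in ranked:
    print(f"{score:.3f}  {name}")
```

Concepts two hops from the query, such as "statically typed languages", still surface, but with lower scores than direct neighbors. That is the behavior keyword matching alone would miss.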

Persistent context across coding sessions. Your agent remembers preferences, decisions, and project architecture.

Ongoing debates across sessions. Remind tracks arguments, positions, and open threads.

Feed in papers, find commonalities and contradictions. Consolidation surfaces themes across sources.